Rozlyn Redd, Helen Ward

8 November 2021

On September 17th the People Like You team held a public event at Kings Place, London, on Contemporary Figures of Personalisation. It was an opportunity to showcase some of the work of our project, including presentations from three artists in residence, and to discuss key issues in personalisation across a range of sectors.

One vivid image that clearly resonated with people across the event was “those shoes that follow you round the internet.” A panellist pithily brought it up to describe the practice of similar adverts reappearing across multiple platforms, and in the final session the image reappeared, transformed into “these boots are made for stalking”. The shoes provided an example through which to grapple with the techniques that underpin personalisation, such as the harvesting and sale of browsing data, or the use of predictive algorithms to finely segment users based on preference and similarity to others. These processes, including the creation and marketing of data assets, are common across the many sectors where personalisation is practised.

In this blog we briefly describe the sessions, although we cannot do justice to the richness and scope of the day. Neither can we easily convey the enjoyable, humorous and collaborative atmosphere. As the first in-person event many of us had attended since the beginning of the pandemic, it was also a great chance to connect with people through conversation.

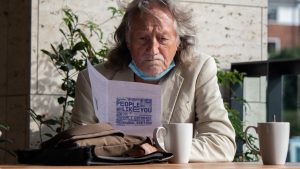

For a brief look at the day browse the gallery here

Portraits of people like you: “trying to be a person is a piece of work”

The morning started with presentations from our resident artists and illuminated themes from the People Like You programme as a whole. Felicity Allen presented her Dialogic Portraits, some of which were also on display, and a linked film, Figure to Ground – a site losing its system. These watercolour portraits emerge out of conversations with her sitters, while the film allowed these sitters to explore their own participation. Felicity’s reflections on how a person might be figured in a portrait – which might be a painting but, in our other artists’ work, might also be a poem or a data set – permeated the day’s discussions about artistic practice. As Flick explained, “In this project I’ve often thought of the brushstroke as a form of data which builds a picture of a person… So what type of brushstroke most resembles data, and whose data is it, the sitter’s or mine?”

This relationship between the person and data is a theme that travels across the People Like You project. Stefanie Posavec’s work, Data Murmurations: Points in Flight, presented a series of visualisations of an epidemiological study, Airwave, which includes data and samples from a cohort of research participants. This work started by asking “How is the original donating person (participant) figured at every step of the collection and research process?” By starting with a stakeholder – an investigator, researcher, nurse, or participant – and asking how they perceive people behind the numbers, Stef’s captivating lines, boxes, swirls, and dots provide us with a creative representation of a complex system.

Di Sherlock’s presentation of her Written Portraits included wonderful readings from three actors who brought the works to life. Di recalled conversations with her sitters at a cancer charity and NHS hospital, and their responses to the poems she later “gave” to them. She described the responses which related to whether they “liked” the work, and whether they felt it was “like” them. The difference and similarity between like (preference) and like (similarity) is another theme that permeates the People Like You collaboration.

In conversation with the artists after their presentations, Lucy Kimbell encouraged them to share more of their experiences of working in the project, asking how it affected their art and autonomy. Our intention in including artists in the collaborative research programme was to learn from their creative process and to better understand issues of personhood and the relations between self and collective identities.

Personalisation in Practice

The next panel discussed how personalisation is understood and practised in different sectors, with speakers from public policy (Jon Ainger), advertising (Sandeep Ahluwalia), and public health (Deborah Ashby).

As the panellists introduced themselves, each highlighted key aspects and tensions inherent to personalisation within their field. For Sandeep, advertising firms must learn how to synthesise signals and use new tools like AI and machine learning to produce personalised marketing – a process that has changed dramatically in the past 5 years.

For Deborah, personalisation has three moments in the field of public health and medicine: first, how different treatments work differently in different people; second, and most common, traditional forms of segmentation and stratification, where different patients are prescribed different treatments based on overall risk; third, how an individual values risk themselves, which is highly context specific. The relationship between personalisation, the individual and community is also turned on end in public health: what does personalisation mean when our actions and decisions impact other people?

Jon gave the example of a mother advocating over the delivery of her disabled son’s social care. For her, choice was the key to meeting her son’s needs: “If I don’t have any agency, if I’m not in control, if I’m not in complete control, you can never give me enough money to meet my need as a parent.” For Jon, personalisation is about knowing an entire person, and getting people more involved in making decisions increases the effectiveness of services. Here, personalisation is about engagement, but he argued that data is important too. There are, however, critical limitations on how data can be applied in social care: should data stratification play a role in children’s social care? When is it going too far? How do we decide to set limits to data-based solutions to a problem?

One theme drawn out by the discussion is that personalisation is bespoke – it is not fully automated. For Sandeep, one of the challenges of personalisation in the digital space is that there is a difference between what brands want to communicate and what their audience is interested in. As a practitioner, finding a Venn diagram of their overlapping interests is crucial. Jon argued that in social care, there are very complex issues that are continually being disrupted due to changes in policy, relationships between sectors, and adapting processes to particular situations. Deborah argued that while algorithms can be used to understand patterns, we should be wary of using them to automate decisions, as they can bake inequality into a decision-making process.

A second theme that was brought up was inclusion in data. For Sandeep, personalisation often fails when lists of target individuals are scaled up too far. Within healthcare, Deborah argued, you need to think about who is not in data. For example, with routinely collected data, those who are most represented in the data might be those with the least amount of need.

On the whole, this discussion reflected the findings of our team’s ‘What is Personalisation?’ study, led by William Viney, which asked if personalisation could be understood as a unified process. Personalisation varies across domains, and this variance is driven by practical challenges, resource limitations, and context-specific histories.

Any Questions?

The final panel addressed the future of personalisation, with Timandra Harkness putting audience questions to Paul Mason (writer and journalist), Reema Patel (Ada Lovelace Institute), Natalie Banner (formerly lead of Understanding Patient Data, Wellcome Trust), and Rosa Curling (Foxglove). The questions were wide-ranging and the panellists’ answers provocative.

“Is overpersonalisation killing personalisation?” In response to this opening challenge, the panellists focused on power and how it is distributed in this space: who collects data about people, whether use of personal data leads to overdetermination, and when it feels like it’s too personal: “the shoes that follow you around the internet.” Discussing the future of personalisation meant considering how the UK government will act on data protection, data sharing and consent in the future. This was particularly true for answering the audience question, “How will people think about sharing their healthcare data for research in 10 years’ time?” Rosa challenged the government goal of centralising GP health records and argued that there needs to be more friction in this decision. Does it need to be centralised? Who benefits if it is? Could it be federated? Who will have access? Instead of centralising GP data, local authorities could create a more democratic space through localised consent and control, and through this process create trust in the NHS and data sharing in the future. Reema argued that the current pattern of techlash between the UK government and the public – in which data privacy policies meet hostile public reactions and are followed by government quick fixes – has undermined public confidence and trust in shared data for the future (e.g. care.data, GPDPR). For Natalie, in ten years there is potential for citizens to be much more aware of the contents of their healthcare data and how health records can be used to improve the healthcare system and their own lives. Paul countered that other forms of health data (e.g. health apps and lifestyle tracking) could make NHS data irrelevant.

When tasked with thinking through the issue of using big data for calculating health risk, Reema gave the example of the Shielded Patients List as a potentially valuable application, where a risk score was created and used to direct resources during an emergency response. Natalie argued that there is a vast difference between being able to see a pattern in data (which happens now) and being able to make an accurate prediction from it. Following up Natalie’s response, Timandra asked the panellists: is it OK to make predictions about individuals based on aggregate trends in data? For Rosa, these issues are not new with the advent of personalisation: there have always been racist decision-making processes, and algorithms are just a new machine working within an existing power structure in a way that harms the most vulnerable members of the community.

The panellists also discussed a range of examples where personalisation is related to social integration and access: social credit scoring; BBC recommendation algorithms focused on helping users explore diverse content and the hope of bringing people together; personalisation of access for those who have varying interests and needs; the tendency of algorithms to bring together communities of outrage rather than those of difference or nuance; and the recent example of communities coming together during the pandemic around health issues, which opened a dialogue around the current tension between individual liberty and public health outcomes.

What kind of consciousness will tech acceleration and the continuous prompt to express preference bring? … How is it changing us? Reema suggested that when you introduce something new it shapes the way people behave and feel, an effect felt especially when prediction determines your future. Rosa was more hopeful: with more exposure to the harms that come with algorithms, like the A-level fiasco, where the government appeared not to care, people are now conscious that these kinds of things are happening around us all the time, and we should be thinking about them. Natalie thought that many people are unaware of the kinds of concerns that we have brought up in this conversation – she felt it was novel and scary.

The conversation reflected key aspects of our research agenda in People Like You: how do we think about participation, data, algorithms, and inequality as facets of personalisation? What can we learn from talking about the future of personalisation across domains and time? As it turns out, quite a lot.